On-Cloud Setup

ML Workbench on Cloud is designed to require minimal setup.

The Cloud version of ML Workbench is currently in preview release and is available by invitation only for TigerGraph Cloud instances. If you are interested in experimenting or developing with the preview release, please contact sales@tigergraph.com.

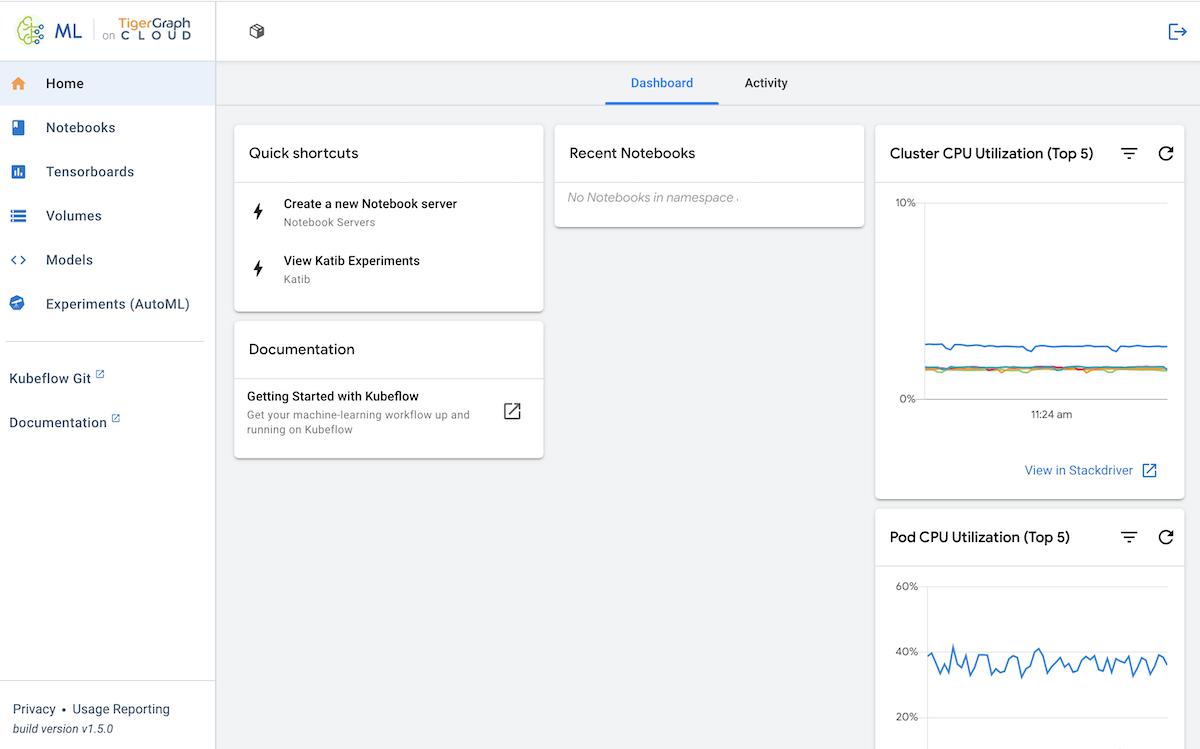

ML Workbench on Cloud is built on a Kubernetes/Kubeflow framework that enables notebook creation, Tensorboards, and model serving from one integrated platform.

This documentation describes some of the most common functions for performing machine learning operations with a TigerGraph Cloud instance. For more detailed documentation of the Kubeflow platform, please see the Kubeflow documentation.

Example Business Workflow

The following example workflow illustrates a use case for each component of ML Workbench on Cloud.

- A business organization uses TigerGraph Cloud to create a database for their complex data.
- To solve a business problem with the data stored in their Cloud database, they decide to build a machine learning model.
- They use the ML Workbench Notebook feature to create a Python notebook connected to their TigerGraph instance in order to train the model.
- A Tensorboard helps them visualize the process of training the model, and AutoML helps them tune the hyperparameters.
- Once the model is trained, they can interact with it using their custom Python code in the Notebooks or use it as an API with the Model Server.
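The notebook step in the workflow above can be sketched in Python as follows. This is a minimal sketch, not the documented ML Workbench setup: it assumes the `pyTigerGraph` package is installed in the notebook, and the `TGSettings` class, the `load_settings` and `connect` helpers, and the `TG_HOST`, `TG_GRAPH`, `TG_USERNAME`, and `TG_PASSWORD` environment variable names are all hypothetical names chosen for illustration.

```python
import os
from dataclasses import dataclass


@dataclass(frozen=True)
class TGSettings:
    """Connection settings for a TigerGraph Cloud solution (hypothetical helper)."""
    host: str
    graphname: str
    username: str
    password: str


def load_settings(env=os.environ) -> TGSettings:
    """Read connection settings from environment variables.

    The variable names are assumptions made for this sketch; use whatever
    secret-handling mechanism your notebook environment provides.
    """
    host = env.get("TG_HOST", "")
    if not host.startswith("https://"):
        raise ValueError("TG_HOST must be an https:// URL")
    return TGSettings(
        host=host.rstrip("/"),  # normalize away a trailing slash
        graphname=env.get("TG_GRAPH", "MyGraph"),
        username=env.get("TG_USERNAME", "tigergraph"),
        password=env.get("TG_PASSWORD", ""),
    )


def connect(settings: TGSettings):
    """Open a connection with pyTigerGraph.

    The import is done here so that load_settings() can be used
    even when pyTigerGraph is not installed.
    """
    from pyTigerGraph import TigerGraphConnection

    return TigerGraphConnection(
        host=settings.host,
        graphname=settings.graphname,
        username=settings.username,
        password=settings.password,
    )
```

In a notebook cell, `conn = connect(load_settings())` would then yield a connection object for the training code to use. Keeping credentials in environment variables rather than in the notebook itself avoids saving them alongside the notebook's output.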

First Launch

Access ML Workbench from the TigerGraph Cloud main page.

Note: Your TigerGraph Cloud account must have access to a TigerGraph Cloud solution in order to connect that solution to an ML Workbench notebook.

The first time anyone in a new TigerGraph Cloud organization accesses ML Workbench, they are prompted for a namespace. A namespace is analogous to a project name: it manages the connections between one or more TigerGraph Cloud solutions and one or more ML Workbench tools.
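Because ML Workbench is built on Kubernetes/Kubeflow, it may help to pick a namespace that would also be a valid Kubernetes namespace name. The sketch below checks a candidate name against the Kubernetes rule for namespaces (an RFC 1123 DNS label: lowercase letters, digits, and hyphens, starting and ending with a letter or digit, at most 63 characters). Whether the ML Workbench prompt enforces exactly this rule is an assumption, and `is_valid_namespace` is a hypothetical helper, not part of any TigerGraph tool.

```python
import re

# RFC 1123 DNS label, the format Kubernetes requires for namespace names:
# lowercase alphanumerics and '-', starting and ending with an alphanumeric.
_DNS_LABEL = re.compile(r"[a-z0-9]([-a-z0-9]*[a-z0-9])?")


def is_valid_namespace(name: str) -> bool:
    """Return True if `name` is a valid RFC 1123 DNS label of at most 63 characters."""
    return len(name) <= 63 and _DNS_LABEL.fullmatch(name) is not None
```

For example, `is_valid_namespace("fraud-detection")` is true, while `is_valid_namespace("Fraud_Detection")` is false because of the uppercase letters and the underscore.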